
Overview

Llama 3.1 is a new state-of-the-art model from Meta, available in 8B, 70B, and 405B parameter sizes.

Models

| Name | Size | Context length | Input type | Ollama pull command |
|---|---|---|---|---|
| llama3.1:latest | 4.9 GB | 128K | Text | ollama pull llama3.1:latest |
| llama3.1:8b | 4.9 GB | 128K | Text | ollama pull llama3.1:8b |
| llama3.1:70b | 43 GB | 128K | Text | ollama pull llama3.1:70b |
| llama3.1:405b | 243 GB | 128K | Text | ollama pull llama3.1:405b |
| llama3.1:8b-instruct-q2_K | 3.2 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q2_K |
| llama3.1:8b-instruct-q3_K_S | 3.7 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q3_K_S |
| llama3.1:8b-instruct-q3_K_M | 4.0 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q3_K_M |
| llama3.1:8b-instruct-q3_K_L | 4.3 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q3_K_L |
| llama3.1:8b-instruct-q4_0 | 4.7 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q4_0 |
| llama3.1:8b-instruct-q4_1 | 5.1 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q4_1 |
| llama3.1:8b-instruct-q4_K_S | 4.7 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q4_K_S |
| llama3.1:8b-instruct-q4_K_M | 4.9 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q4_K_M |
| llama3.1:8b-instruct-q5_0 | 5.6 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q5_0 |
| llama3.1:8b-instruct-q5_1 | 6.1 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q5_1 |
| llama3.1:8b-instruct-q5_K_S | 5.6 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q5_K_S |
| llama3.1:8b-instruct-q5_K_M | 5.7 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q5_K_M |
| llama3.1:8b-instruct-q6_K | 6.6 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q6_K |
| llama3.1:8b-instruct-q8_0 | 8.5 GB | 128K | Text | ollama pull llama3.1:8b-instruct-q8_0 |
| llama3.1:8b-instruct-fp16 | 16 GB | 128K | Text | ollama pull llama3.1:8b-instruct-fp16 |
| llama3.1:8b-text-q2_K | 3.2 GB | 128K | Text | ollama pull llama3.1:8b-text-q2_K |
| llama3.1:8b-text-q3_K_S | 3.7 GB | 128K | Text | ollama pull llama3.1:8b-text-q3_K_S |
| llama3.1:8b-text-q3_K_M | 4.0 GB | 128K | Text | ollama pull llama3.1:8b-text-q3_K_M |
| llama3.1:8b-text-q3_K_L | 4.3 GB | 128K | Text | ollama pull llama3.1:8b-text-q3_K_L |
| llama3.1:8b-text-q4_0 | 4.7 GB | 128K | Text | ollama pull llama3.1:8b-text-q4_0 |
| llama3.1:8b-text-q4_1 | 5.1 GB | 128K | Text | ollama pull llama3.1:8b-text-q4_1 |
| llama3.1:8b-text-q4_K_S | 4.7 GB | 128K | Text | ollama pull llama3.1:8b-text-q4_K_S |
| llama3.1:8b-text-q4_K_M | 4.9 GB | 128K | Text | ollama pull llama3.1:8b-text-q4_K_M |
| llama3.1:8b-text-q5_0 | 5.6 GB | 128K | Text | ollama pull llama3.1:8b-text-q5_0 |
| llama3.1:8b-text-q5_1 | 6.1 GB | 128K | Text | ollama pull llama3.1:8b-text-q5_1 |
| llama3.1:8b-text-q5_K_S | 5.6 GB | 128K | Text | ollama pull llama3.1:8b-text-q5_K_S |
| llama3.1:8b-text-q5_K_M | 5.7 GB | 128K | Text | ollama pull llama3.1:8b-text-q5_K_M |
| llama3.1:8b-text-q6_K | 6.6 GB | 128K | Text | ollama pull llama3.1:8b-text-q6_K |
| llama3.1:8b-text-q8_0 | 8.5 GB | 128K | Text | ollama pull llama3.1:8b-text-q8_0 |
| llama3.1:8b-text-fp16 | 16 GB | 128K | Text | ollama pull llama3.1:8b-text-fp16 |
| llama3.1:70b-instruct-q2_K | 26 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q2_K |
| llama3.1:70b-instruct-q3_K_S | 31 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q3_K_S |
| llama3.1:70b-instruct-q3_K_M | 34 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q3_K_M |
| llama3.1:70b-instruct-q3_K_L | 37 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q3_K_L |
| llama3.1:70b-instruct-q4_0 | 40 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q4_0 |
| llama3.1:70b-instruct-q4_1 | 44 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q4_1 |
| llama3.1:70b-instruct-q4_K_S | 40 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q4_K_S |
| llama3.1:70b-instruct-q4_K_M | 43 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q4_K_M |
| llama3.1:70b-instruct-q5_0 | 49 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q5_0 |
| llama3.1:70b-instruct-q5_1 | 53 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q5_1 |
| llama3.1:70b-instruct-q5_K_S | 49 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q5_K_S |
| llama3.1:70b-instruct-q5_K_M | 50 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q5_K_M |
| llama3.1:70b-instruct-q6_K | 58 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q6_K |
| llama3.1:70b-instruct-q8_0 | 75 GB | 128K | Text | ollama pull llama3.1:70b-instruct-q8_0 |
| llama3.1:70b-instruct-fp16 | 141 GB | 128K | Text | ollama pull llama3.1:70b-instruct-fp16 |
| llama3.1:70b-text-q2_K | 26 GB | 128K | Text | ollama pull llama3.1:70b-text-q2_K |
| llama3.1:70b-text-q3_K_S | 31 GB | 128K | Text | ollama pull llama3.1:70b-text-q3_K_S |
| llama3.1:70b-text-q3_K_M | 34 GB | 128K | Text | ollama pull llama3.1:70b-text-q3_K_M |
| llama3.1:70b-text-q3_K_L | 37 GB | 128K | Text | ollama pull llama3.1:70b-text-q3_K_L |
| llama3.1:70b-text-q4_0 | 40 GB | 128K | Text | ollama pull llama3.1:70b-text-q4_0 |
| llama3.1:70b-text-q4_1 | 44 GB | 128K | Text | ollama pull llama3.1:70b-text-q4_1 |
| llama3.1:70b-text-q4_K_S | 40 GB | 128K | Text | ollama pull llama3.1:70b-text-q4_K_S |
| llama3.1:70b-text-q4_K_M | 43 GB | 128K | Text | ollama pull llama3.1:70b-text-q4_K_M |
| llama3.1:70b-text-q5_0 | 49 GB | 128K | Text | ollama pull llama3.1:70b-text-q5_0 |
| llama3.1:70b-text-q5_1 | 53 GB | 128K | Text | ollama pull llama3.1:70b-text-q5_1 |
| llama3.1:70b-text-q5_K_S | 49 GB | 128K | Text | ollama pull llama3.1:70b-text-q5_K_S |
| llama3.1:70b-text-q5_K_M | 50 GB | 128K | Text | ollama pull llama3.1:70b-text-q5_K_M |
| llama3.1:70b-text-q6_K | 58 GB | 128K | Text | ollama pull llama3.1:70b-text-q6_K |
| llama3.1:70b-text-q8_0 | 75 GB | 128K | Text | ollama pull llama3.1:70b-text-q8_0 |
| llama3.1:70b-text-fp16 | 141 GB | 128K | Text | ollama pull llama3.1:70b-text-fp16 |
| llama3.1:405b-instruct-q2_K | 149 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q2_K |
| llama3.1:405b-instruct-q3_K_S | 175 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q3_K_S |
| llama3.1:405b-instruct-q3_K_M | 195 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q3_K_M |
| llama3.1:405b-instruct-q3_K_L | 213 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q3_K_L |
| llama3.1:405b-instruct-q4_0 | 229 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q4_0 |
| llama3.1:405b-instruct-q4_1 | 254 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q4_1 |
| llama3.1:405b-instruct-q4_K_S | 231 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q4_K_S |
| llama3.1:405b-instruct-q4_K_M | 243 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q4_K_M |
| llama3.1:405b-instruct-q5_0 | 279 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q5_0 |
| llama3.1:405b-instruct-q5_1 | 305 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q5_1 |
| llama3.1:405b-instruct-q5_K_S | 279 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q5_K_S |
| llama3.1:405b-instruct-q5_K_M | 287 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q5_K_M |
| llama3.1:405b-instruct-q6_K | 333 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q6_K |
| llama3.1:405b-instruct-q8_0 | 431 GB | 128K | Text | ollama pull llama3.1:405b-instruct-q8_0 |
| llama3.1:405b-instruct-fp16 | 812 GB | 128K | Text | ollama pull llama3.1:405b-instruct-fp16 |
| llama3.1:405b-text-q2_K | 149 GB | 128K | Text | ollama pull llama3.1:405b-text-q2_K |
| llama3.1:405b-text-q3_K_S | 175 GB | 128K | Text | ollama pull llama3.1:405b-text-q3_K_S |
| llama3.1:405b-text-q3_K_M | 195 GB | 128K | Text | ollama pull llama3.1:405b-text-q3_K_M |
| llama3.1:405b-text-q3_K_L | 213 GB | 128K | Text | ollama pull llama3.1:405b-text-q3_K_L |
| llama3.1:405b-text-q4_0 | 229 GB | 128K | Text | ollama pull llama3.1:405b-text-q4_0 |
| llama3.1:405b-text-q4_1 | 254 GB | 128K | Text | ollama pull llama3.1:405b-text-q4_1 |
| llama3.1:405b-text-q4_K_S | 231 GB | 128K | Text | ollama pull llama3.1:405b-text-q4_K_S |
| llama3.1:405b-text-q4_K_M | 243 GB | 128K | Text | ollama pull llama3.1:405b-text-q4_K_M |
| llama3.1:405b-text-q5_0 | 279 GB | 128K | Text | ollama pull llama3.1:405b-text-q5_0 |
| llama3.1:405b-text-q5_1 | 305 GB | 128K | Text | ollama pull llama3.1:405b-text-q5_1 |
| llama3.1:405b-text-q5_K_S | 279 GB | 128K | Text | ollama pull llama3.1:405b-text-q5_K_S |
| llama3.1:405b-text-q5_K_M | 287 GB | 128K | Text | ollama pull llama3.1:405b-text-q5_K_M |
| llama3.1:405b-text-q6_K | 333 GB | 128K | Text | ollama pull llama3.1:405b-text-q6_K |
| llama3.1:405b-text-q8_0 | 431 GB | 128K | Text | ollama pull llama3.1:405b-text-q8_0 |
| llama3.1:405b-text-fp16 | 812 GB | 128K | Text | ollama pull llama3.1:405b-text-fp16 |
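
The tag names in the table appear to follow a `size-variant-quantization` pattern, and the listed sizes give a rough sense of how many bits each quantization spends per weight. The Python sketch below is illustrative only: `parse_tag` and `bits_per_weight` are hypothetical helpers, the regular expression is inferred from the tags listed here rather than from any official naming specification, and the estimate assumes 1 GB = 10^9 bytes and treats the nominal parameter counts (8B, 70B, 405B) as exact.

```python
import re
from typing import NamedTuple, Optional


class Tag(NamedTuple):
    params_b: float          # nominal parameter count, in billions
    variant: Optional[str]   # "instruct", "text", or None for short tags
    quant: Optional[str]     # e.g. "q4_K_M" or "fp16", or None for short tags


# Pattern inferred from the tags in the table; "llama3.1:latest" is not covered.
TAG_RE = re.compile(r"^llama3\.1:(\d+)b(?:-(instruct|text)-(\w+))?$")


def parse_tag(tag: str) -> Tag:
    """Split a tag such as 'llama3.1:8b-instruct-q4_K_M' into its parts."""
    m = TAG_RE.match(tag)
    if m is None:
        raise ValueError(f"unrecognized tag: {tag}")
    return Tag(float(m.group(1)), m.group(2), m.group(3))


def bits_per_weight(size_gb: float, params_b: float) -> float:
    """Rough average bits per parameter (assumes 1 GB = 10**9 bytes)."""
    return size_gb * 8 / params_b


tag = parse_tag("llama3.1:8b-instruct-fp16")
print(tag.variant, tag.quant, bits_per_weight(16, tag.params_b))
```

Applied to the table, the `fp16` rows come out near 16 bits per weight and the `q4_K_M` rows near 5, which is a quick way to sanity-check whether a given tag is likely to fit in available memory.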

Details

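Llama 3.1 405B is the first openly available model that rivals the top AI models in state-of-the-art capabilities such as general knowledge, steerability, math, tool use, and multilingual translation.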

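The upgraded 8B and 70B models are multilingual, have a significantly longer context length of 128K, and offer state-of-the-art tool use and stronger overall reasoning. This lets Meta's latest models support advanced use cases such as long-form text summarization, multilingual conversational agents, and coding assistants.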
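
As a minimal sketch of the long-form summarization use case, the Python snippet below sends a document to a locally running Ollama server through its `/api/generate` endpoint. It assumes the server listens on the default `http://localhost:11434` and that `llama3.1:8b` has already been pulled. The helper names `build_request` and `summarize`, the prompt wording, and the `num_ctx` value of 32768 are illustrative choices, not recommendations from the source; a larger context window generally needs more memory.

```python
import json
import urllib.request

# Assumed default address of a local Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(document: str, model: str = "llama3.1:8b",
                  num_ctx: int = 32768) -> dict:
    """Build the JSON payload for a single, non-streaming generate call."""
    return {
        "model": model,
        "prompt": f"Summarize the following text in three sentences:\n\n{document}",
        "stream": False,                  # return one JSON object, not a stream
        "options": {"num_ctx": num_ctx},  # context window requested for this call
    }


def summarize(document: str, **kwargs) -> str:
    """Send the request to the local server and return the generated text."""
    payload = json.dumps(build_request(document, **kwargs)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["response"]
```

Calling `summarize(open("report.txt").read())` would return the summary as a string, where `report.txt` is a placeholder file name. Setting `num_ctx` explicitly matters because the server may otherwise use a context window smaller than the model's 128K maximum.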

Meta has also changed its license to allow developers to use the outputs of Llama models, including the 405B, to improve other models.

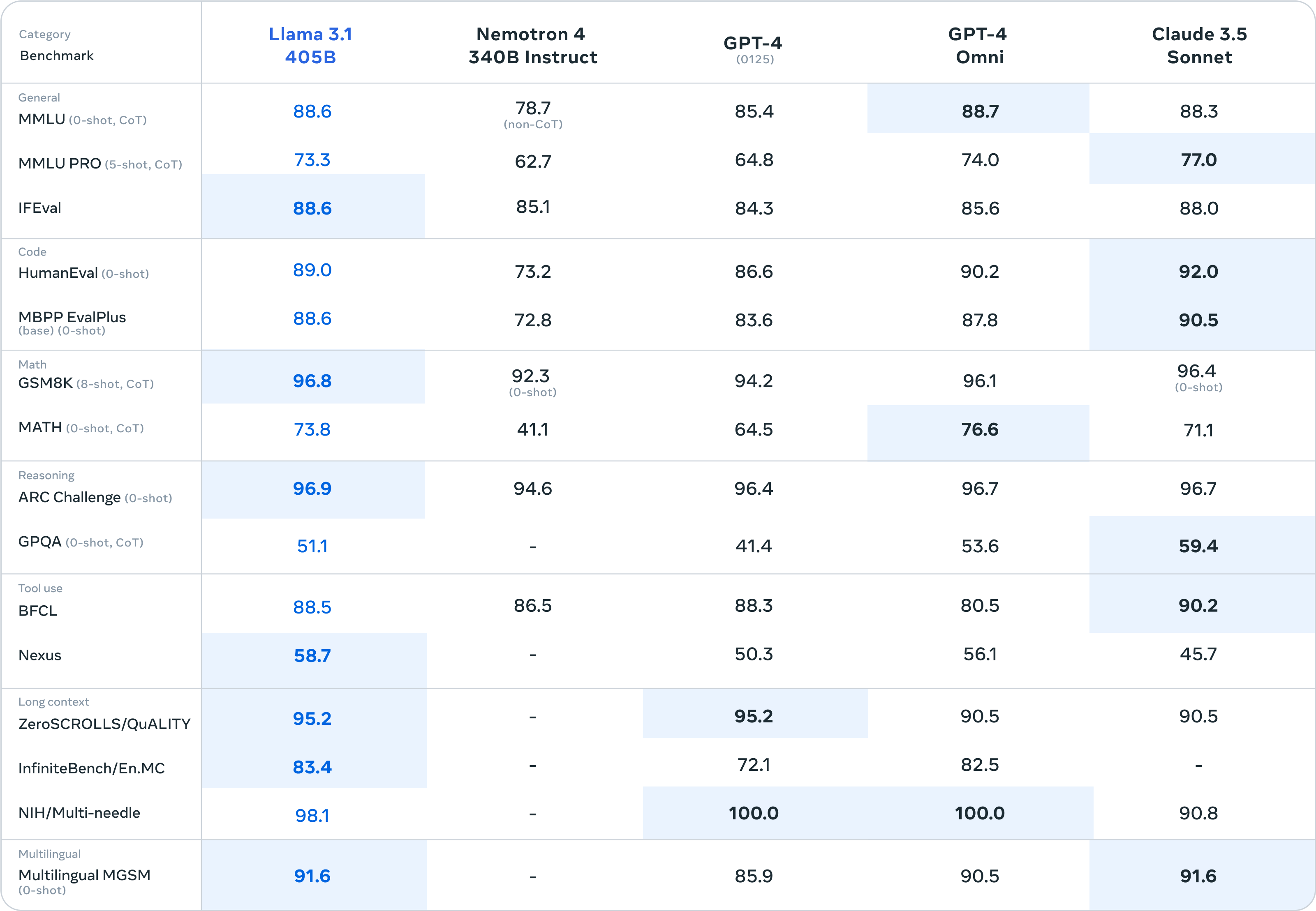

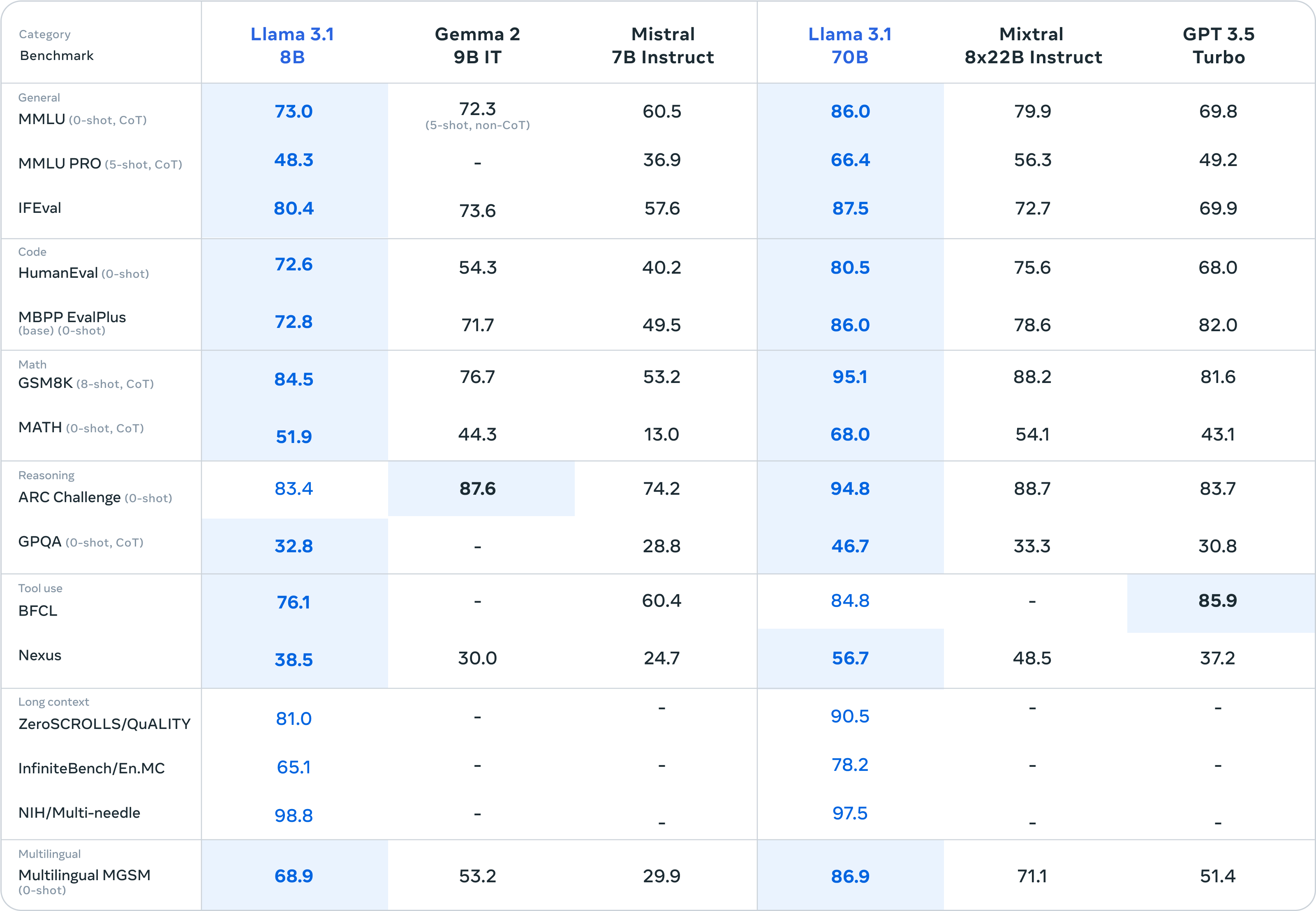

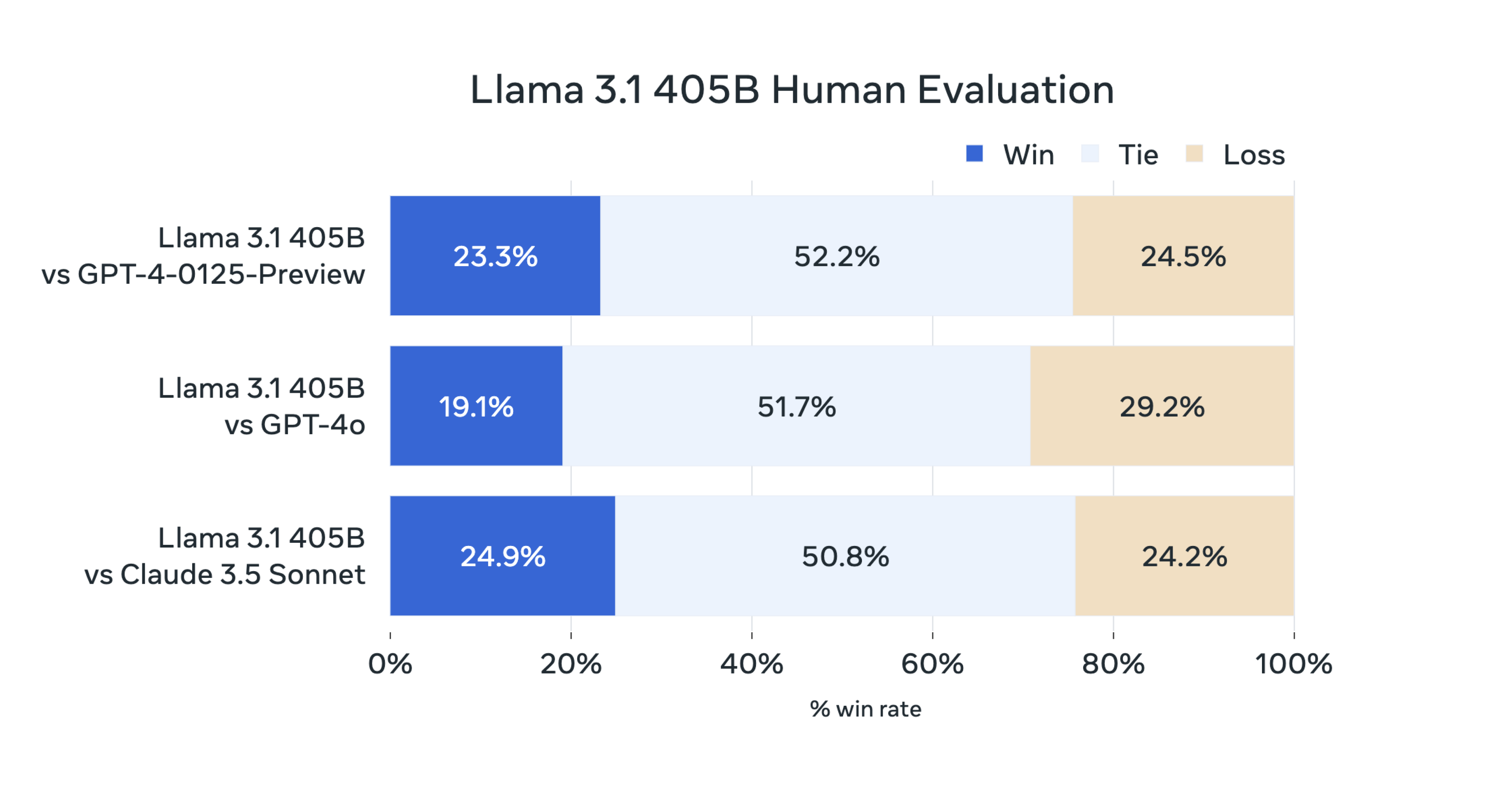

Model evaluations

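For this release, Meta evaluated performance on more than 150 benchmark datasets spanning a wide range of languages. Meta also ran extensive human evaluations comparing Llama 3.1 with competing models in real-world scenarios. Meta's experimental evaluation suggests that its flagship model is competitive with leading foundation models, including GPT-4, GPT-4o, and Claude 3.5 Sonnet, across a range of tasks. In addition, Meta's smaller models are competitive with closed and open models that have a similar number of parameters.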