
Overview

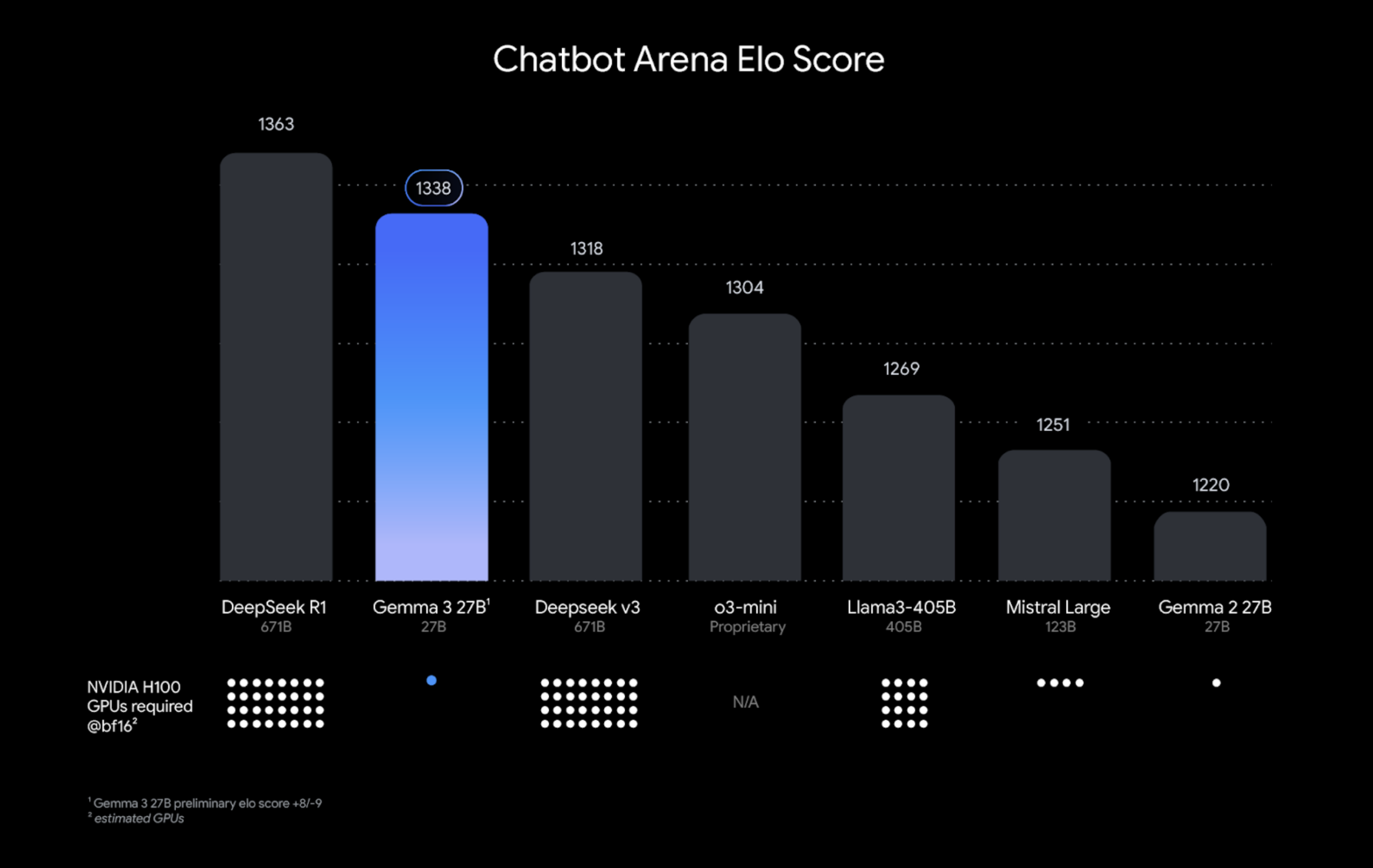

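Gemma is a family of lightweight models from Google, built on the technology behind Gemini. Gemma 3 comes in 270M, 1B, 4B, 12B, and 27B parameter sizes. The 4B, 12B, and 27B models are multimodal (they accept text and images) and have a 128K-token context window. The 270M and 1B models are text-only and have a 32K context window. Gemma 3 supports more than 140 languages and performs well on tasks such as question answering, summarization, and reasoning. Its compact design makes it possible to deploy on devices with limited resources. Google bills Gemma 3 as the most capable model that can run on a single GPU.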
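The following minimal Python sketch makes the text/image split concrete. It builds a request for Ollama's local REST endpoint `/api/generate`. It assumes that an Ollama server is running on the default `localhost:11434`. The helper names (`supports_images`, `build_request`, `generate`) are hypothetical. The `model`, `prompt`, `stream`, and `images` (base64 strings) fields follow Ollama's API. The text-only check simply encodes the 270M and 1B rows of the model table.

```python
import base64
import json
import urllib.request
from typing import Optional

# Assumption: a local Ollama server listening on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"

# From the model table: only the 270m and 1b sizes are text-only.
TEXT_ONLY_SIZES = {"270m", "1b"}


def supports_images(tag: str) -> bool:
    """Return True unless the gemma3 tag names a text-only size."""
    name = tag.split(":", 1)[1] if ":" in tag else "latest"
    size = name.split("-", 1)[0]  # "1b-it-qat" -> "1b"
    return size not in TEXT_ONLY_SIZES


def build_request(tag: str, prompt: str, image: Optional[bytes] = None) -> dict:
    """Build a non-streaming /api/generate payload, optionally with one image."""
    payload = {"model": tag, "prompt": prompt, "stream": False}
    if image is not None:
        if not supports_images(tag):
            raise ValueError(f"{tag} is text-only; use a 4b, 12b or 27b tag")
        # Ollama takes images as a list of base64-encoded strings.
        payload["images"] = [base64.b64encode(image).decode("ascii")]
    return payload


def generate(payload: dict) -> str:
    """POST the payload to the local server and return the generated text."""
    req = urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["response"]
```

With a server running, `generate(build_request("gemma3:4b", "Describe this image.", open("photo.jpg", "rb").read()))` would send one image to the 4B model. Here, `photo.jpg` is a placeholder path.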

Models

| Name | Size | Context | Input | Ollama command |
|---|---|---|---|---|
| gemma3:latest | 3.3GB | 128K | Text, Image | ollama run gemma3:latest |
| gemma3:270m | 292MB | 32K | Text | ollama run gemma3:270m |
| gemma3:1b | 815MB | 32K | Text | ollama run gemma3:1b |
| gemma3:4b | 3.3GB | 128K | Text, Image | ollama run gemma3:4b |
| gemma3:12b | 8.1GB | 128K | Text, Image | ollama run gemma3:12b |
| gemma3:27b | 17GB | 128K | Text, Image | ollama run gemma3:27b |
| gemma3:270m-it-qat | 241MB | 32K | Text | ollama run gemma3:270m-it-qat |
| gemma3:270m-it-q8_0 | 292MB | 32K | Text | ollama run gemma3:270m-it-q8_0 |
| gemma3:270m-it-fp16 | 543MB | 32K | Text | ollama run gemma3:270m-it-fp16 |
| gemma3:270m-it-bf16 | 543MB | 32K | Text | ollama run gemma3:270m-it-bf16 |
| gemma3:1b-it-qat | 1.0GB | 32K | Text | ollama run gemma3:1b-it-qat |
| gemma3:1b-it-q4_K_M | 815MB | 32K | Text | ollama run gemma3:1b-it-q4_K_M |
| gemma3:1b-it-q8_0 | 1.1GB | 32K | Text | ollama run gemma3:1b-it-q8_0 |
| gemma3:1b-it-fp16 | 2.0GB | 32K | Text | ollama run gemma3:1b-it-fp16 |
| gemma3:4b-it-qat | 4.0GB | 128K | Text, Image | ollama run gemma3:4b-it-qat |
| gemma3:4b-it-q4_K_M | 3.3GB | 128K | Text, Image | ollama run gemma3:4b-it-q4_K_M |
| gemma3:4b-it-q8_0 | 5.0GB | 128K | Text, Image | ollama run gemma3:4b-it-q8_0 |
| gemma3:4b-it-fp16 | 8.6GB | 128K | Text, Image | ollama run gemma3:4b-it-fp16 |
| gemma3:12b-it-qat | 8.9GB | 128K | Text, Image | ollama run gemma3:12b-it-qat |
| gemma3:12b-it-q4_K_M | 8.1GB | 128K | Text, Image | ollama run gemma3:12b-it-q4_K_M |
| gemma3:12b-it-q8_0 | 13GB | 128K | Text, Image | ollama run gemma3:12b-it-q8_0 |
| gemma3:12b-it-fp16 | 24GB | 128K | Text, Image | ollama run gemma3:12b-it-fp16 |
| gemma3:27b-it-qat | 18GB | 128K | Text, Image | ollama run gemma3:27b-it-qat |
| gemma3:27b-it-q4_K_M | 17GB | 128K | Text, Image | ollama run gemma3:27b-it-q4_K_M |
| gemma3:27b-it-q8_0 | 30GB | 128K | Text, Image | ollama run gemma3:27b-it-q8_0 |
| gemma3:27b-it-fp16 | 55GB | 128K | Text, Image | ollama run gemma3:27b-it-fp16 |
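The download sizes in the table above differ by about two orders of magnitude. A practical first step is therefore to pick the largest default tag that fits the available memory. The sketch below is a hypothetical helper. Its sizes are copied from the default tags in the table. The 1.2x `headroom` factor is an assumption: the download size is only a lower bound, because the KV cache for long contexts needs extra memory on top.

```python
from typing import Optional

# Download sizes (GB) of the default tags, copied from the table above.
GEMMA3_DEFAULT_SIZES_GB = {
    "gemma3:270m": 0.292,
    "gemma3:1b": 0.815,
    "gemma3:4b": 3.3,
    "gemma3:12b": 8.1,
    "gemma3:27b": 17.0,
}


def largest_fitting(budget_gb: float, headroom: float = 1.2) -> Optional[str]:
    """Return the largest default tag whose size * headroom fits in budget_gb.

    `headroom` is a hypothetical safety factor: the download size is only a
    lower bound on memory, since the KV cache grows with the context length.
    """
    fitting = [
        (size, tag)
        for tag, size in GEMMA3_DEFAULT_SIZES_GB.items()
        if size * headroom <= budget_gb
    ]
    return max(fitting)[1] if fitting else None
```

For example, `largest_fitting(8.0)` returns `"gemma3:4b"`. The 12b tag is excluded because 8.1 GB × 1.2 exceeds the 8 GB budget.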

Evaluation

Gemma 3 270M

| Benchmark | n-shot | Gemma 3 270M (instruction-tuned) |
|---|---|---|
| HellaSwag | 0-shot | 37.7 |
| PIQA | 0-shot | 66.2 |
| ARC-C | 0-shot | 28.2 |
| WinoGrande | 0-shot | 52.3 |
| BIG-Bench Hard | few-shot | 26.7 |
| IF Eval | 0-shot | 51.2 |
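The n-shot column gives the number of worked examples placed in the prompt before the test item. A 0-shot prompt contains none. A minimal sketch of assembling such a prompt follows. The `Q:`/`A:` template and the `build_n_shot_prompt` name are illustrative assumptions, not the format the evaluation harness actually used.

```python
from typing import List, Tuple


def build_n_shot_prompt(examples: List[Tuple[str, str]], question: str, n: int) -> str:
    """Prefix `question` with the first n solved examples; n == 0 gives a 0-shot prompt."""
    if not 0 <= n <= len(examples):
        raise ValueError("n must be between 0 and len(examples)")
    # Hypothetical "Q:/A:" template chosen for illustration.
    shots = [f"Q: {q}\nA: {a}" for q, a in examples[:n]]
    shots.append(f"Q: {question}\nA:")
    return "\n\n".join(shots)
```

The model is then expected to continue the text after the final `A:`. Raising n trades a longer prompt for a clearer demonstration of the expected answer format.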

These models were evaluated against a large collection of datasets and metrics that cover different aspects of text generation:

Reasoning, logic, and code capabilities

| Benchmark | Metric | Gemma 3 PT 1B | Gemma 3 PT 4B | Gemma 3 PT 12B | Gemma 3 PT 27B |
|---|---|---|---|---|---|
| HellaSwag | 10-shot | 62.3 | 77.2 | 84.2 | 85.6 |
| BoolQ | 0-shot | 63.2 | 72.3 | 78.8 | 82.4 |
| PIQA | 0-shot | 73.8 | 79.6 | 81.8 | 83.3 |
| SocialIQa | 0-shot | 48.9 | 51.9 | 53.4 | 54.9 |
| TriviaQA | 5-shot | 39.8 | 65.8 | 78.2 | 85.5 |
| Natural Questions | 5-shot | 9.48 | 20.0 | 31.4 | 36.1 |
| ARC-C | 25-shot | 38.4 | 56.2 | 68.9 | 70.6 |
| ARC-e | 0-shot | 73.0 | 82.4 | 88.3 | 89.0 |
| WinoGrande | 5-shot | 58.2 | 64.7 | 74.3 | 78.8 |
| BIG-Bench Hard | — | 28.4 | 50.9 | 72.6 | 77.7 |
| DROP | 3-shot, F1 | 42.4 | 60.1 | 72.2 | 77.2 |
| AGIEval | 3–5-shot | 22.2 | 42.1 | 57.4 | 66.2 |
| MMLU | 5-shot, top-1 | 26.5 | 59.6 | 74.5 | 78.6 |
| MATH | 4-shot | — | 24.2 | 43.3 | 50.0 |
| GSM8K | 5-shot, maj@1 | 1.36 | 38.4 | 71.0 | 82.6 |
| GPQA | — | 9.38 | 15.0 | 25.4 | 24.3 |
| MMLU (Pro) | 5-shot | 11.2 | 23.7 | 40.8 | 43.9 |
| MBPP | 3-shot | 9.80 | 46.0 | 60.4 | 65.6 |
| HumanEval | pass@1 | 6.10 | 36.0 | 45.7 | 48.8 |
| MMLU (Pro CoT) | 5-shot | 9.7 | — | — | — |
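The HumanEval row is scored with pass@1, the share of problems for which a generated program passes the unit tests. Suppose n samples are drawn per problem and c of them pass. pass@k is then commonly estimated with the unbiased estimator introduced alongside HumanEval, 1 - C(n-c, k) / C(n, k), averaged over problems. For k = 1 this reduces to c / n. The sketch below computes the estimate for one problem. This page does not say how many samples were drawn, so treat the sketch as an illustration of the metric only.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem: n samples, c of which pass."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Every size-k subset of the samples contains at least one passing one.
        return 1.0
    # Probability that a random size-k subset contains no passing sample, complemented.
    return 1.0 - comb(n - c, k) / comb(n, k)
```

The binomial form avoids the bias of simply computing 1 - (1 - c/n)^k from a small n.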

Multilingual capabilities

File 1: Multilingual / text tasks

| Benchmark | Gemma 3 PT 1B | Gemma 3 PT 4B | Gemma 3 PT 12B | Gemma 3 PT 27B |
|---|---|---|---|---|
| MGSM | 2.04 | 34.7 | 64.3 | 74.3 |
| Global-MMLU-Lite | 24.9 | 57.0 | 69.4 | 75.7 |
| Belebele | 26.6 | 59.4 | 78.0 | — |
| WMT24++ (ChrF) | 36.7 | 48.4 | 53.9 | 55.7 |
| FloRes | 29.5 | 39.2 | 46.0 | 48.8 |
| XL-Sum | 4.82 | 8.55 | 12.2 | 14.9 |
| XQuAD (all) | 43.9 | 68.0 | 74.5 | 76.8 |

Multimodal capabilities

| Benchmark | Gemma 3 PT 4B | Gemma 3 PT 12B | Gemma 3 PT 27B |
|---|---|---|---|
| COCO Captions (CIDEr) | 102 | 111 | 116 |
| DocVQA (val) | 72.8 | 82.3 | 85.6 |
| InfoVQA (val) | 44.1 | 54.8 | 59.4 |
| MMMU (pt) | 39.2 | 50.3 | 56.1 |
| TextVQA (val) | 58.9 | 66.5 | 68.6 |
| RealWorldQA | 45.5 | 52.2 | 53.9 |
| ReMI | 27.3 | 38.5 | 44.8 |
| AI2D | 63.2 | 75.2 | 79.0 |
| ChartQA | 45.4 | 60.9 | 63.8 |
| ChartQA (augmented) | 81.8 | 88.5 | 88.7 |
| VQAv2 | — | — | — |
| BLINK | 38.0 | 35.9 | 39.6 |
| OKVQA | 51.0 | 58.7 | 60.2 |
| TallyQA | 42.5 | 51.8 | 54.3 |
| SpatialSense VQA | 50.9 | 60.0 | 59.4 |
| CountBenchQA | 26.1 | 17.8 | 68.0 |